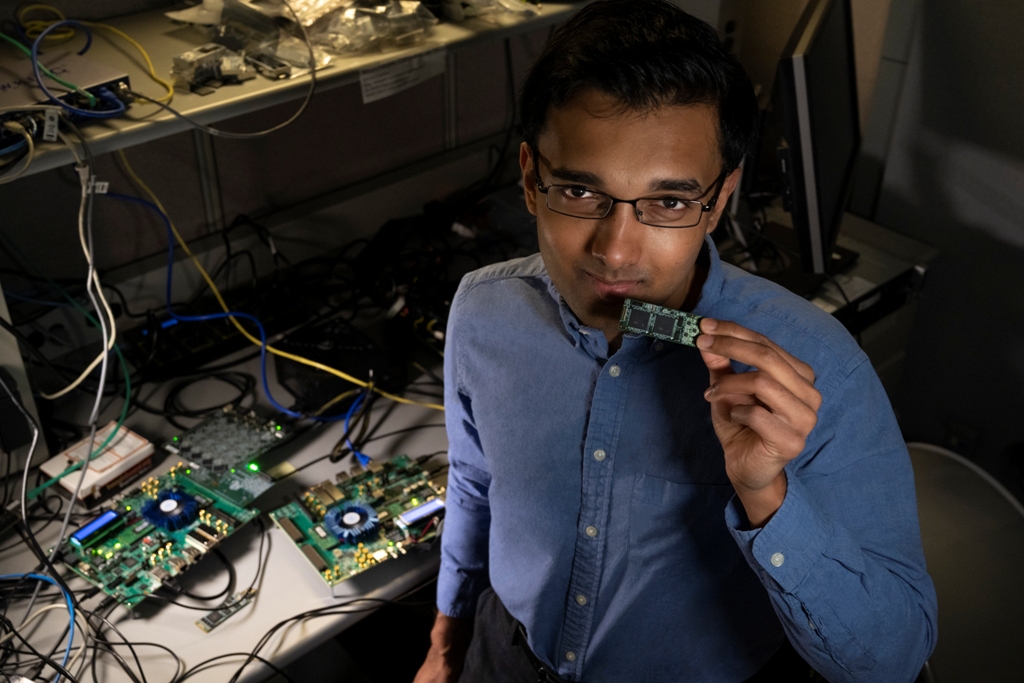

The Future of Neuromorphic Computing: Nabil Imam, Intel Senior Research Scientist, Explains Neuromorphic Computing

Nabil Imam, a senior research scientist in Intel Labs’ neuromorphic computing group, works with olfactory neurophysiologists at Cornell University. “My friends at Cornell study the biological olfactory system in animals and measure the electrical activity in their brains as they smell odors,” explains Imam, who has a doctorate in neuromorphic computing. “On the basis of these circuit diagrams and electrical pulses, we derived a set of algorithms and configured them on neuromorphic silicon, specifically our Loihi test chip.” Loihi is Intel’s neuromorphic computing chip that applies the principles of computation found in biological brains to computer architectures.

It’s in the news: Today, Nature Machine Intelligence profiled research by Intel and Cornell University scientists who are building these mathematical algorithms. With researchers’ guidance, Loihi rapidly learned neural representations of 10 different odors.

First, how we smell: If you pick up a grapefruit and take a whiff, that fruit’s molecules stimulate olfactory cells in your nose (the word olfactory originates from the Latin olfactare, which means “to smell”). The cells in your nose immediately send signals to your brain’s olfactory system, where electrical pulses within an interconnected group of neurons generate the sensation of a smell. Whether you’re smelling a grapefruit, a rose or a noxious gas, networks of neurons in your brain create sensations specific to the object. Similarly, your senses of sight and sound, your recall of memory, your emotions and your decision-making each have individual neural networks that compute in particular ways.

Loihi learns to detect distinct odors in complex mixtures: Imam and team took a dataset consisting of the activity of 72 chemical sensors in response to 10 gaseous substances (odors) circulating within a wind tunnel. The sensors’ responses to the individual scents were transmitted to Loihi where silicon circuits mimicked the circuitry of the brain underlying the sense of smell. The chip rapidly learned neural representations of each of the 10 smells, including acetone, ammonia and methane, and identified them even in the presence of strong background interferents. Your smoke and carbon monoxide detectors at home use sensors to detect odors but they cannot distinguish between them; they beep when they detect harmful molecules in the air but are unable to categorize them in intelligent ways.
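To make the task concrete, here is a toy sketch of odor identification under background interference. It is not the spiking-network algorithm the researchers ran on Loihi; it swaps in plain nearest-prototype matching on made-up data. Only the counts of 72 sensors and 10 odors come from the article. The response patterns, the noise cap and all function names are assumptions chosen for illustration.

```python
import random

NUM_SENSORS = 72   # sensor count stated in the article
NUM_ODORS = 10     # odor count stated in the article
NOISE_MAX = 0.3    # hypothetical cap on background interference per sensor


def make_prototype(odor):
    """Hypothetical clean response: sensor i reacts only to odor (i mod 10)."""
    return [1.0 if i % NUM_ODORS == odor else 0.0 for i in range(NUM_SENSORS)]


def add_interference(reading, rng):
    """Add bounded background interference to every sensor value."""
    return [value + rng.uniform(0.0, NOISE_MAX) for value in reading]


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def classify(reading, prototypes):
    """Return the index of the stored prototype closest to the reading."""
    return min(range(len(prototypes)),
               key=lambda k: squared_distance(reading, prototypes[k]))


def run_demo(seed=0):
    """Classify one noisy reading per odor and return the predicted labels."""
    rng = random.Random(seed)
    prototypes = [make_prototype(k) for k in range(NUM_ODORS)]
    return [classify(add_interference(prototypes[k], rng), prototypes)
            for k in range(NUM_ODORS)]


if __name__ == "__main__":
    print(run_demo())  # prints [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
```

With these made-up patterns, every odor is recovered because the interference is small relative to the gap between prototypes. Real sensor responses overlap far more, which is the harder problem the research addresses.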

Future applications: Imam says the chemical-sensing community has looked for years for smart, reliable and fast-responding chemosensory processing systems, otherwise called “electronic nose systems.” He sees the potential of robots equipped with neuromorphic chips for environmental monitoring and hazardous materials detection, or for quality control chores in factories. Such systems could also support medical diagnoses, since some diseases emit particular odors. In another example, neuromorphic-equipped robots could better identify hazardous substances in airport security lines.

Adding more senses in the future: “My next step,” Imam says, “is to generalize this approach to a wider range of problems — from sensory scene analysis (understanding the relationships between objects you observe) to abstract problems like planning and decision-making. Understanding how the brain’s neural circuits solve these complex computational problems will provide important clues for designing efficient and robust machine intelligence.”

Challenges to overcome: There are challenges in olfactory sensing, Imam says. When you walk into a grocery store, you might smell a strawberry, but its smell might be similar to that of a blueberry or a banana, which induce very similar neural activity patterns in the brain. Sometimes it’s hard even for humans to distinguish one fruit from a blend of scents. Systems might get tripped up when they smell a strawberry from Italy and one from California, which might have different aromas yet need to be grouped into a common category. “These are challenges in olfactory signal recognition that we’re working on and that we hope to solve in the next couple of years before this becomes a product that can solve real-world problems beyond the experimental ones we have demonstrated in the lab,” Imam says. His work, he contends, is a “prime example of contemporary research taking place at the crossroads of neuroscience and artificial intelligence.”